TIMWOODS Needs a T. The Ninth Waste of Lean is Tokens.

Taiichi Ohno identified seven wastes. The West added an eighth. AI demands a ninth.

Token prices have fallen roughly 280-fold over the past two years. Total enterprise AI spend has risen 320% in the same period (Oplexa). The unit cost is collapsing, but the total bill keeps climbing.

The AI industry calls this the inference cost paradox. It should sound familiar to anyone who has watched a factory invest in faster machines and somehow end up with higher costs. The resource got cheaper. The process around it did not get leaner. And so the waste scaled with the volume.
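The paradox is easier to see as arithmetic. Total spend is price per token multiplied by tokens consumed, so if spend rose while the unit price fell, volume must have grown by the ratio of the two. The sketch below assumes the 280-fold price fall and the 320% spend increase describe the same workloads. They may not, so treat the result as indicative rather than measured.

```python
def implied_volume_multiplier(price_fall_factor: float, spend_increase_pct: float) -> float:
    """Return how many times token volume must have grown.

    Total spend = price per token x tokens consumed, so
    volume multiplier = spend multiplier / price multiplier.
    """
    spend_multiplier = 1 + spend_increase_pct / 100  # +320% means 4.2x the original spend
    price_multiplier = 1 / price_fall_factor         # a 280-fold fall means 1/280 of the old price
    return spend_multiplier / price_multiplier


# Figures quoted above: prices down roughly 280x, spend up 320%.
volume = implied_volume_multiplier(280, 320)
print(f"Token volume grew roughly {volume:,.0f}x")
```

On those assumptions, consumption grew by roughly three orders of magnitude. The cheaper resource did not shrink the bill; it was simply used far more.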

Lean manufacturing has a name for this kind of problem. In fact, it has eight of them.

The framework that keeps evolving

In 1948, a Toyota engineer named Taiichi Ohno began cataloguing the ways a factory wastes money without realising it. Over the next three decades, he identified seven categories of waste, or muda: Transportation, Inventory, Motion, Waiting, Overproduction, Overprocessing, and Defects. Together they formed the backbone of the Toyota Production System and, eventually, of lean manufacturing as we know it.

The acronym TIMWOOD may sound like an avuncular neighbour. In practice, the wastes it names are insidious, lurking inside your organisation, quietly eroding your margin and slowing your growth.

The framework was remarkably durable. It survived the jump from Japanese automotive production to Western manufacturing, then to healthcare, logistics, software development, and dozens of other sectors. But when lean practitioners in the West started applying Ohno's system in the 1990s, they noticed something the factory-floor framework had missed. Organisations were wasting the knowledge, creativity, and problem-solving capacity of their own people. The eighth waste, Skills (or non-utilised talent), was added. TIMWOOD became TIMWOODS.

That addition matters for what I want to argue here. The eighth waste was not a correction of Ohno's thinking. It was a recognition that a new context had surfaced a new category of resource that the original framework did not account for. Ohno was solving for physical production systems. The Western practitioners who added Skills were solving for organisations where the people on the line held information that management had not yet learned to use, or at least had no framework for deploying effectively. Slapping a T on the end of TIMWOODS is admittedly clunky. But lean is not a methodology of orthodox rigidity. It is about continuous improvement, and that includes improving the framework itself.

I think we are at the same inflection point again. And the resource the framework is missing is tokens.

What tokens are and why they cost money

If you have used ChatGPT, Claude, Gemini, or any large language model in the last two years, you have consumed tokens. A token is the basic unit of input and output that an AI model processes. As a rule of thumb, one token is about four characters of English text, or three-quarters of a word. Every question you ask, every document you upload, every response the model generates: all of it is measured in tokens. And all of it costs money.
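The rough ratios above are enough for a back-of-envelope estimate. A minimal sketch, assuming plain English text: real tokenisers vary by model and language, so use your provider's own tokeniser when you need an exact figure. The function name `estimate_tokens` is illustrative.

```python
def estimate_tokens(text: str) -> dict[str, float]:
    """Rough token estimates for English text using two rules of thumb.

    Heuristics only: actual counts depend on the model's tokeniser.
    """
    by_chars = len(text) / 4             # about 4 characters per token
    by_words = len(text.split()) / 0.75  # about three-quarters of a word per token
    return {"by_chars": by_chars, "by_words": by_words}


sample = "Every question you ask, every document you upload, every response the model generates."
print(estimate_tokens(sample))
```

The two estimates will rarely agree exactly; the point is an order of magnitude, not an invoice.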

For individual users on subscription plans, this cost is largely invisible. You pay a monthly fee and the token consumption happens in the background. But for businesses using AI through APIs, through embedded tools, or at any kind of scale, tokens are a direct and increasingly significant operating cost. And as visual models, world models, and multimodal AI continue to develop, the tokenisation of vastly larger and more complex data sets will push that cost further still.

According to Deloitte, AI is now the fastest-growing expense in corporate technology budgets. Enterprise cloud computing bills rose 19% in 2025, driven largely by generative AI workloads. The average enterprise AI budget has grown from $1.2 million per year in 2024 to $7 million in 2026. Nearly half of leaders expect it will take up to three years to see ROI from basic AI automation, and only 28% of global finance leaders report clear, measurable value from their investments.

The cost is real but the visibility is not. That gap is exactly where the waste hides.

Why this matters beyond Silicon Valley

At this point you might reasonably ask: is this a problem that affects anyone outside of large technology companies?

Not yet, for most businesses. But the trajectory is clear, and the signal from the top of the industry is unambiguous. To deny it would be the equivalent of sitting in the late 1990s and insisting that personal computing would not fundamentally change the way we work and live. Even this sentence was written, and is being read, in pixels rather than print.

At Nvidia's GTC conference in March 2026, CEO Jensen Huang proposed that engineers earning $500,000 a year should be consuming at least $250,000 worth of AI tokens annually. He compared an employee who does not use AI to a chip designer who refuses to use CAD tools and insists on pencil and paper. He said that if a $500,000 engineer's annual token bill came in at $5,000, he would be "deeply alarmed."

It is worth pausing on Huang's undeniable profit incentive. He is the CEO of the company that sells the GPUs powering all of this. His enthusiasm for heavy token consumption is not entirely disinterested. But set aside the specific numbers, which apply to elite Silicon Valley engineers and not to most UK businesses, and the underlying logic is significant. Huang is treating token consumption as a measure of productivity, a resource that should be actively managed, budgeted, and optimised. Nvidia is reportedly aiming to spend around $2 billion a year on tokens for its engineering workforce alone.

This is the direction of travel. Tokens are becoming an operational input, like energy, raw materials, or labour hours. And once a resource becomes an operational input, lean thinking has something to say about how you manage it.

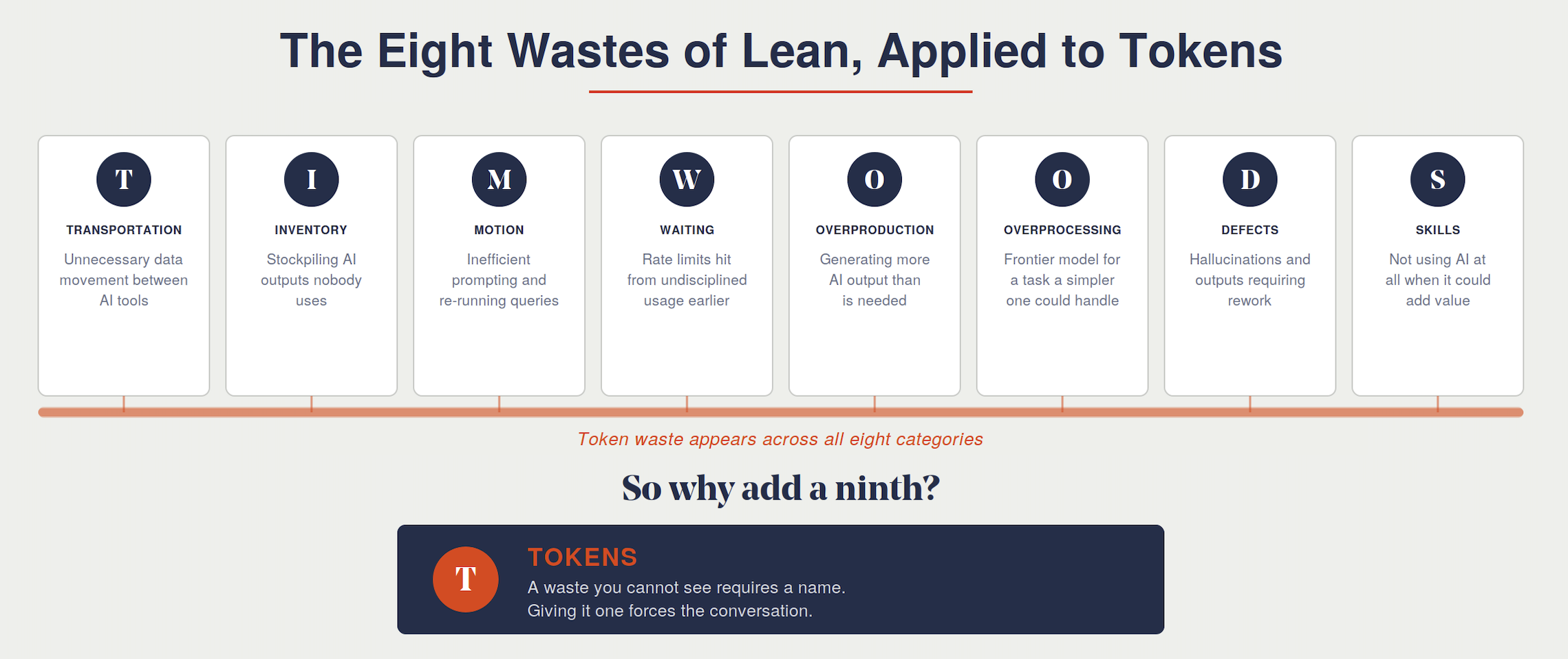

The eight wastes, applied to tokens

Before proposing Tokens as a standalone ninth waste, it is worth seeing how token waste already shows up across the existing eight categories. This is not a theoretical exercise. Every one of these is happening in businesses right now.

Transportation. Unnecessary movement of data between AI tools. Copying content out of one platform, reformatting it, and pasting it into another. Re-uploading the same documents to different models because the workflow was not designed to avoid it. Every redundant data transfer consumes tokens and adds nothing.
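Designing a workflow to avoid redundant transfers can be as simple as remembering what has already been asked. A minimal sketch, assuming a single model behind a hypothetical `call_model` function (any callable that takes a prompt and returns text): results are stored against the task and a hash of the document's content, so sending the same document for the same task a second time costs nothing. `DocumentCache` is likewise an illustrative name.

```python
import hashlib
from typing import Callable


class DocumentCache:
    """Reuse earlier results instead of re-sending the same document to a model.

    `call_model` is a hypothetical stand-in for whatever API or tool you use:
    any function that takes a prompt string and returns the model's text.
    """

    def __init__(self, call_model: Callable[[str], str]) -> None:
        self.call_model = call_model
        self.results: dict[tuple[str, str], str] = {}
        self.calls_made = 0

    def ask(self, task: str, document: str) -> str:
        # Key on the document's content rather than its filename, so a
        # re-uploaded copy of the same text is still recognised.
        digest = hashlib.sha256(document.encode("utf-8")).hexdigest()
        key = (task, digest)
        if key not in self.results:
            self.calls_made += 1
            self.results[key] = self.call_model(f"{task}\n\n{document}")
        return self.results[key]


# A dummy model for demonstration; swap in a real call in practice.
cache = DocumentCache(call_model=lambda prompt: f"[summary of {len(prompt)} characters]")
contract = "Clause 1: The supplier shall deliver within 14 days."
cache.ask("Summarise this contract", contract)
cache.ask("Summarise this contract", contract)  # served from the cache, no second call
print(cache.calls_made)
```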

Inventory. Stockpiling AI-generated content that nobody uses. Generating reports, analyses, summaries, and drafts that sit in folders unread. The AI equivalent of producing stock that goes straight to the warehouse shelf. The tokens were consumed. The value was never realised.

Motion. Inefficient prompting. Running the same query multiple times because the first attempt was vague. Iterating through six versions of a prompt when a well-structured one would have landed it in two. Every unnecessary iteration is wasted motion, consuming tokens and time without adding value.

Waiting. Rate limits hit at peak usage because the budget was consumed during low-value tasks earlier in the day. Models queuing because prompts were larger than they needed to be. Time spent waiting for responses that could have been faster with a smaller, better-targeted request. I have experienced this one personally: burning through usage allowance on low-priority tasks and then hitting limits during the workflows that actually matter.
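One way to stop low-value work draining the allowance is to ring-fence part of it for the workflows that matter. A minimal sketch, assuming a simple daily token allowance you track yourself; the `TokenAllowance` class and the 40% reserve are illustrative, not a feature of any particular provider.

```python
class TokenAllowance:
    """A daily token allowance with part of it ring-fenced for high-priority work.

    Hypothetical class for illustration; the 40% reserve is an assumption.
    """

    def __init__(self, daily_tokens: int, reserved_fraction: float = 0.4) -> None:
        self.remaining = daily_tokens
        self.reserved = round(daily_tokens * reserved_fraction)

    def request(self, tokens: int, high_priority: bool) -> bool:
        """Deduct `tokens` if the request fits; return whether it was allowed."""
        # Low-priority work may only draw on the unreserved part of the allowance;
        # high-priority work may use everything that is left.
        available = self.remaining if high_priority else self.remaining - self.reserved
        if tokens > available:
            return False
        self.remaining -= tokens
        # High-priority work spends the unreserved pool first; the reserve only
        # shrinks once what is left falls below it.
        self.reserved = min(self.reserved, self.remaining)
        return True


allowance = TokenAllowance(daily_tokens=100_000)        # 40,000 held in reserve
print(allowance.request(50_000, high_priority=False))  # fits in the unreserved 60,000
print(allowance.request(20_000, high_priority=False))  # refused: would eat into the reserve
print(allowance.request(20_000, high_priority=True))   # allowed: this is what the reserve is for
```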

Overproduction. Generating more AI output than is needed. Asking for 2,000 words when 200 would do. Running a full analysis on a question that could have been answered with a quick lookup. Producing ten variations of a piece of copy when the brief only called for three. Every surplus token is overproduction.

Overprocessing. Using a frontier model for a task that a simpler, cheaper model could handle perfectly well. The AI industry calls this the "Big Model Fallacy": the assumption that every query needs the most powerful model available. A classification task that a lightweight model could handle in milliseconds gets routed to a frontier model that costs fifty times more per token. That is overprocessing in its purest form.
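Matching the model to the task can start as a simple lookup table. A minimal sketch: the tier names, task types and prices below are hypothetical, chosen only so that the frontier tier costs fifty times more per token than the lightweight one, as in the example above.

```python
# Hypothetical tiers and prices in USD per million tokens. The 50x gap mirrors
# the ratio quoted above; real prices vary by provider and change often.
PRICE_PER_MILLION = {"lightweight": 0.30, "frontier": 15.00}

# Illustrative mapping from task type to the cheapest tier assumed adequate.
TIER_FOR_TASK = {
    "classification": "lightweight",
    "extraction": "lightweight",
    "short_summary": "lightweight",
    "complex_reasoning": "frontier",
}


def route(task_type: str) -> str:
    """Pick a tier for a task; unrecognised tasks fall back to the frontier tier."""
    return TIER_FOR_TASK.get(task_type, "frontier")


def cost_usd(tokens: int, tier: str) -> float:
    """Cost of processing `tokens` on the given tier."""
    return tokens / 1_000_000 * PRICE_PER_MILLION[tier]


# 10,000 classification calls of roughly 500 tokens each.
tokens = 10_000 * 500
print(f"Routed:       ${cost_usd(tokens, route('classification')):.2f}")
print(f"All frontier: ${cost_usd(tokens, 'frontier'):.2f}")
```

With these made-up prices, the routed batch costs $1.50 against $75.00 if everything goes to the frontier tier. Unrecognised tasks fall back to the frontier tier here, on the assumption that a wrong answer costs more than an expensive one; the saving comes from routing the known, simple tasks down.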

Defects. Hallucinations. Inaccurate outputs that require rework or regeneration. Responses that do not meet the brief because the input was poorly structured. Every defective AI output that has to be discarded and regenerated is a defect in lean terms: resources consumed, no value delivered, and additional resources required to fix it.
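Lean practitioners will recognise this as first-pass yield. If some AI outputs have to be thrown away and regenerated, the true cost of a usable output is higher than the price of one attempt. A minimal sketch, assuming each attempt succeeds independently with the same probability, so the expected number of attempts is one divided by the yield. The 2p cost per attempt is a hypothetical figure.

```python
def cost_per_accepted_output(cost_per_attempt: float, first_pass_yield: float) -> float:
    """Expected cost of one usable output when failed attempts are discarded and rerun.

    Assumes each attempt independently succeeds with probability `first_pass_yield`,
    so the expected number of attempts per usable output is 1 / first_pass_yield.
    """
    if not 0 < first_pass_yield <= 1:
        raise ValueError("first_pass_yield must be between 0 (exclusive) and 1")
    return cost_per_attempt / first_pass_yield


# The same hypothetical 2p attempt at two different levels of prompt discipline.
for yield_rate in (0.5, 0.8):
    print(f"yield {yield_rate:.0%}: {cost_per_accepted_output(2.0, yield_rate):.1f}p per usable output")
```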

Skills. Not using AI at all when it could add value. This is Huang's point, turned through a lean lens. An organisation that pays for AI tools but whose people lack the training, confidence, or permission to use them is wasting the potential of both the technology and the workforce. It is the same waste Ohno's Western successors identified: underutilised human potential, now compounded by underutilised machine potential.

So why add a ninth?

If token waste maps so neatly onto the existing eight, why not just say "apply TIMWOODS to your AI workflows" and leave it there?

Because the same argument could have been made about Skills in the 1990s. Underutilised talent shows up as overprocessing (management doing work the team could do better), as defects (errors caused by disengaged workers), and as waiting (decisions bottlenecked at the top). The eighth waste could have been absorbed into the existing seven. But practitioners recognised that it represented something fundamentally different: a category of resource, human knowledge and creativity, that the physical-process framework was not built to see.

Tokens present the same challenge. They are invisible. They leave no physical trace. There is no pile of scrap material on the factory floor, no warehouse full of unsold stock, no forklift making unnecessary trips across the building. Token waste happens inside software, inside API calls, inside workflows that most managers never see and most finance teams cannot yet track. According to Larridin, 81% of leaders say AI investments are difficult to quantify, and 79% report that untracked AI budgets are becoming a growing accounting concern.

A waste you cannot see requires a name. Giving it one forces the conversation. It puts token consumption on the same footing as the physical wastes that lean practitioners have been trained to identify for decades. It says: this is a real resource, it has a real cost, and wasting it is not inevitable. It is a process problem. And process problems are what lean exists to solve.

There is also an environmental dimension worth acknowledging. AI infrastructure consumes significant and growing amounts of energy and water. There are compelling arguments for AI's role in addressing the climate crisis, and compelling arguments that its energy footprint is making it worse. Regardless of the balance of those two arguments, wasteful token consumption can only tip the scale in the wrong direction. Efficient use is not just a cost discipline. It is a resource discipline in the fullest sense.

The flywheel connection

If you have read my previous piece on what SpaceX can teach UK modular housebuilding, the underlying logic here will be familiar. Max Olson's insight, that atoms are cheap but process is pricey, applies directly. The cost of a single AI token is negligible. The cost of a poorly designed process that consumes thousands of tokens without producing proportionate value is not.

Olson's essay makes a related point that matters here: atoms are cheap, but bits are cheaper. Modelling a prototype digitally costs a fraction of building one physically. Running ten design iterations through an AI tool costs a fraction of running one through a workshop. This is the same flywheel logic: lower costs enable more iteration, more iteration accelerates learning, and faster learning compounds into better products. Tokens spent on that cycle are not waste. They are fuel. The discipline is in making sure the fuel goes into the engine rather than onto the floor.

The organisations that will manage this well are the ones that treat AI consumption the way lean manufacturers treat any other input: with visibility, measurement, and a relentless focus on eliminating non-value-adding activity. Not by restricting use. Not by rationing access. But by building processes that are efficient by design, so that AI budgets are spent well, every token consumed moves the work forward, and the flywheel spins faster because of it.

That means prompt discipline: knowing what you want before you ask, structuring the input so the output lands first time. It means model selection: matching the tool to the task rather than defaulting to the most powerful option. It means workflow design: building AI into processes deliberately rather than layering it on top of existing workflows and hoping for the best. And it means measurement: knowing what you spend, where you spend it, and what value it produces.
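Measurement does not need a dedicated platform to begin with. A minimal sketch, assuming you can export a log of calls with token counts and costs from your provider or tool: the `UsageRecord` fields, the workflow names and the figures are hypothetical, and `output_used` stands in for however you judge whether a result actually fed into the work.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """One model call, as exported from a provider dashboard or tool log (hypothetical fields)."""
    workflow: str       # which business process the call served
    input_tokens: int
    output_tokens: int
    cost: float         # in whatever currency you are billed in
    output_used: bool   # did the result actually feed into the work?


def summarise(records: list[UsageRecord]) -> dict[str, dict[str, float]]:
    """Total tokens, total cost and share of unused outputs for each workflow."""
    totals: dict[str, dict[str, float]] = defaultdict(
        lambda: {"tokens": 0, "cost": 0.0, "calls": 0, "unused": 0}
    )
    for r in records:
        t = totals[r.workflow]
        t["tokens"] += r.input_tokens + r.output_tokens
        t["cost"] += r.cost
        t["calls"] += 1
        if not r.output_used:
            t["unused"] += 1
    for t in totals.values():
        t["unused_share"] = t["unused"] / t["calls"]
    return dict(totals)


records = [
    UsageRecord("quote drafting", 1_200, 800, 0.05, True),
    UsageRecord("quote drafting", 1_000, 900, 0.04, False),
    UsageRecord("weekly report", 5_000, 3_000, 0.20, False),
]
for workflow, t in summarise(records).items():
    print(f"{workflow}: {t['tokens']:,} tokens, {t['cost']:.2f} spent, "
          f"{t['unused_share']:.0%} of outputs unused")
```

A workflow with high spend and a high unused share is the first place to look for inventory waste.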

None of this requires a large budget. None of it requires specialist AI expertise. It requires the same mindset that lean has always demanded: look at the process, find the waste, and remove it. The resource is new but the discipline is not.

Where to start

The first time I hit a rate limit mid-workflow because I had burned through my allowance on low-priority tasks that morning, I realised I had no visibility over where my usage was actually going. I was not short of AI access. I was short of discipline in how I used it. That is the question worth starting with: do you know where yours is going?

In the UK, only around a quarter of businesses were using AI by late 2025, and the government's own research found that 60% cited limited skills as a key barrier. Most SMEs are not yet spending heavily on tokens. But usage is only going one way, and the businesses that build lean habits around AI consumption now, while the stakes are low and the costs are modest, will be the ones that manage it effectively when it scales.

The eighth waste was added to TIMWOODS because lean practitioners recognised that the framework needed to evolve with the context it was applied to. The ninth waste, Tokens, is the same recognition applied to the age of AI. The organisations that see it early will build the habits that make AI a compounding advantage. The ones that do not will look back and wonder where the budget went and why the operational gains never arrived.

Nine wastes. Same discipline.

George Palmer is the founder of Make Advisory, helping UK manufacturers and SMEs find the operational improvements already sitting in their businesses. If you want to talk about how lean thinking applies to your AI adoption, get in touch.

Sources

Taiichi Ohno and the Toyota Production System: The 8 Wastes of Lean

Max Olson, Atoms Are Cheap, Process Is Pricey: futureblind.com

Jensen Huang on token budgets, GTC 2026: Tom's Hardware, CNBC

Deloitte, The Pivot to Tokenomics: Navigating AI's New Spend Dynamics: Deloitte Insights

AI inference cost paradox and enterprise spend data: Oplexa

AI usage and token visibility: Larridin

UK AI adoption data: ONS Business Insights Survey, British Chambers of Commerce / Paragon Bank, March 2026

UK Government AI Adoption Research, February 2026: GOV.UK

Artefact, Is AI Really Getting Cheaper? The Token Cost Illusion: Artefact